
If you have written any WebGL applications in the past, be it using the vanilla API or a helper library such as Three.js, you know that you set up the things you want to render, perhaps include different types of cameras, animations and fancy lighting and voilà, the results are rendered to the default WebGL framebuffer, which is the device screen.

Framebuffers are a key feature in WebGL when it comes to creating advanced graphical effects such as depth-of-field, bloom, film grain or various types of anti-aliasing. They allow us to “post-process” our scenes, applying different effects on them once rendered.

This article assumes some intermediate knowledge of WebGL with Three.js. The core ideas behind framebuffers have already been covered in-depth in this article here on Codrops. Please make sure to read it first, as the persistence effect we will be achieving directly builds on top of these ideas.

Persistence effect in a nutshell

I call it persistence, though I am not sure it is the best name for this effect; there may well be an established term I am unaware of. What is it useful for?

We can use it subtly to blend each previous and current animation frame together or perhaps a bit less subtly to hide bad framerate. Looking at video games like Grand Theft Auto, we can simulate our characters getting drunk. Another thing that comes to mind is rendering the view from the cockpit of a spacecraft when traveling at supersonic speed. Or, since the effect is just so good looking in my opinion, use it for all kinds of audio visualisers, cool website effects and so on.

To achieve it, first we would need to create 2 WebGL framebuffers. Since we will be using threejs for this demo, we would have to use THREE.WebGLRenderTarget. We will call them Framebuffer 1 and Framebuffer 2 from now on and they will have the same dimensions as the size of the canvas we are rendering to.

To draw one frame of our animation with persistence, we will need to:

- Render the contents of Framebuffer 1 to Framebuffer 2 with a shader that fades to a certain color. For the purposes of our demo, we will use a pure black color with full opacity

- Render our threejs scene that holds the actual meshes we want to show on the screen to Framebuffer 2 as well

- Render the contents of Framebuffer 2 to the Default WebGL framebuffer (device screen)

- Swap Framebuffer 1 with Framebuffer 2
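The four steps above can be sketched without any WebGL at all. The snippet below is only an illustration under stated assumptions: plain arrays stand in for the framebuffers, a single bright "pixel" that moves one slot per frame stands in for the scene, and the names `fade`, `drawScene` and `runFrames` are hypothetical helpers, not part of threejs.

```javascript
// Step 1: copy the previous frame into the target, faded towards black (0)
function fade (source, target, factor) {
  for (let i = 0; i < source.length; i++) {
    target[i] = source[i] * (1 - factor)
  }
}

// Step 2: draw the "scene" (one fully bright pixel) on top
function drawScene (target, position) {
  target[position] = 1
}

function runFrames (frameCount, factor, width = 8) {
  // Two 1D "framebuffers" of brightness values, both cleared to black
  let framebuffer1 = new Array(width).fill(0)
  let framebuffer2 = new Array(width).fill(0)
  let screen = new Array(width).fill(0)

  for (let frame = 0; frame < frameCount; frame++) {
    fade(framebuffer1, framebuffer2, factor)
    drawScene(framebuffer2, frame % width)

    // Step 3: copy Framebuffer 2 to the "screen"
    screen = framebuffer2.slice()

    // Step 4: swap the two framebuffers
    const swap = framebuffer1
    framebuffer1 = framebuffer2
    framebuffer2 = swap
  }
  return screen
}

// After 4 frames the newest pixel is fully bright and
// older positions are progressively dimmer
console.log(runFrames(4, 0.5))
```

The fade factor of 0.5 is deliberately exaggerated here so that the trail is easy to read in the console.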

Afterwards, for each new animation frame, we will need to repeat the above steps. Let’s illustrate each step:

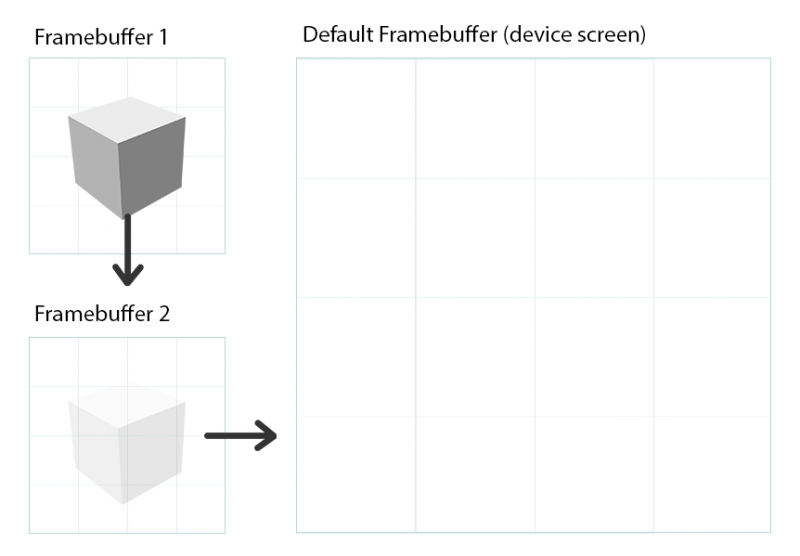

Here is a visualisation of our framebuffers. WebGL gives us the Default framebuffer, represented by the device screen, automatically. It’s up to us as developers to manually create Framebuffer 1 and Framebuffer 2. No animation or rendering has happened yet, so the pixel contents of all 3 framebuffers are empty.

Step 1: we need to render the contents of Framebuffer 1 to Framebuffer 2 with a shader that fades to a certain color. As said, we will use a black color, but for illustration purposes I am fading out to a transparent white color with opacity 0.2. As Framebuffer 1 is empty, this will result in an empty Framebuffer 2:

Step 2: we need to render our threejs scene that holds our meshes / cameras / lighting / etc to Framebuffer 2. Please notice that both Step 1 and Step 2 render on top of each other to Framebuffer 2.

Step 3: After we have successfully rendered Step 1 and Step 2 to Framebuffer 2, we need to render Framebuffer 2 itself to the Default framebuffer:

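Step 4: Now we need to swap Framebuffer 1 with Framebuffer 2. After the swap, Framebuffer 1 holds the frame we just rendered. We then clear Framebuffer 2 (which held the older frame before the swap) and the Default framebuffer: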

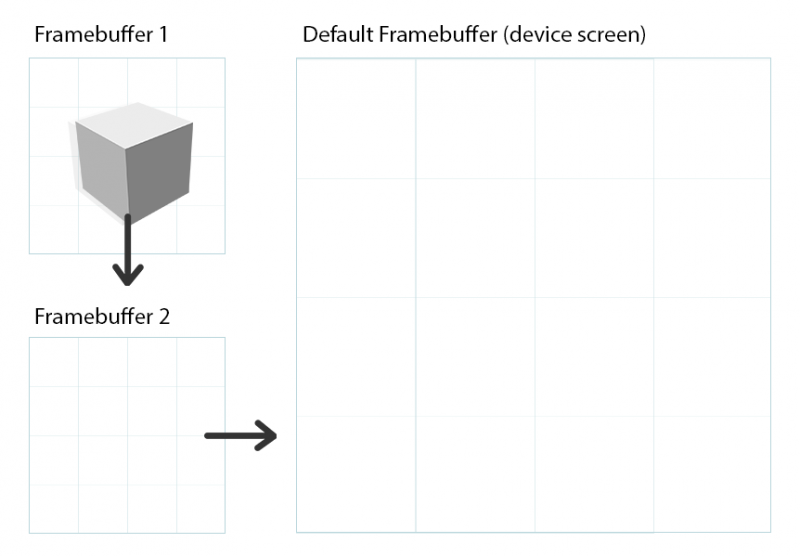

Now comes the interesting part, since Framebuffer 1 is no longer empty. Let’s go over each step once again:

Step 1: we need to render the contents of Framebuffer 1 to Framebuffer 2 with a shader that fades to a certain color. Let’s assume a transparent white color with 0.2 opacity.

Step 2: we need to render our threejs scene to Framebuffer 2. For illustration purposes, let’s assume we have an animation that slowly moves our 3D cube to the right, meaning that now it will be a few pixels to the right:

Step 3: After we have successfully rendered Step 1 and Step 2 to Framebuffer 2, we need to render Framebuffer 2 itself to the Default framebuffer:

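Step 4: Now we need to swap Framebuffer 1 with Framebuffer 2. After the swap, Framebuffer 1 holds the frame we just rendered. We then clear Framebuffer 2 (which held the older frame before the swap) and the Default framebuffer: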

I hope you can see a pattern emerging. If we repeat this process enough times, we will start accumulating each new frame to the previous faded one. Here is how it would look if we repeat enough times:

Here is the demo we will build in this article. It shows the result of repeating the process above many times:

So with this theory out of the way, let’s create this effect with threejs!

Our skeleton app

Let’s write a simple threejs app that will animate a bunch of objects around the screen and use a perspective camera to look at them. I will not explain the code for my example here, as it does not really matter what we render, as long as there is some animation present so things move and we can observe the persistence.

I encourage you to disregard my example and draw something else yourself. Even the most basic spinning cube that moves around the screen will be enough. That being said, here is my scene:

See the Pen 1. Skeleton app by Georgi Nikoloff (@gbnikolov) on CodePen.

The important thing to keep in mind here is that this demo renders to the default framebuffer, represented by the device screen, that WebGL automatically gives us. There are no extra framebuffers involved in this demo up to this point.

Achieving the persistence

Let’s add the code needed to achieve actual persistence. We will start by introducing a THREE.OrthographicCamera.

“Orthographic camera can be useful for rendering 2D scenes and UI elements, amongst other things.” (threejs docs)

Remember, framebuffers allow us to render to image buffers in the video card’s memory instead of the device screen. These image buffers are represented by the THREE.Texture class and are created for us automatically when we instantiate a THREE.WebGLRenderTarget for Framebuffer 1 and Framebuffer 2. In order to display these textures back on the device screen, we need to create two 2D fullscreen quads that span the width and height of the browser window. Since these quads will be 2D, THREE.OrthographicCamera is best suited to display them.

```js
const leftScreenBorder = -innerWidth / 2
const rightScreenBorder = innerWidth / 2
const topScreenBorder = -innerHeight / 2
const bottomScreenBorder = innerHeight / 2
const near = -100
const far = 100

const orthoCamera = new THREE.OrthographicCamera(
  leftScreenBorder,
  rightScreenBorder,
  topScreenBorder,
  bottomScreenBorder,
  near,
  far
)
orthoCamera.position.z = -10
orthoCamera.lookAt(new THREE.Vector3(0, 0, 0))
```

As a next step, let’s create a fullscreen quad geometry using THREE.PlaneGeometry:

```js
const fullscreenQuadGeometry = new THREE.PlaneGeometry(innerWidth, innerHeight)
```

Using our newly created 2D quad geometry, let’s create two fullscreen planes. I will call them fadePlane and resultPlane. They will use THREE.ShaderMaterial and THREE.MeshBasicMaterial respectively:

```js
// To achieve the fading out to black, we will use THREE.ShaderMaterial
const fadeMaterial = new THREE.ShaderMaterial({
  // The texture holding the contents of Framebuffer 1,
  // passed in as a uniform variable
  uniforms: {
    inputTexture: { value: null }
  },
  vertexShader: `
    // Declare a varying variable for texture coordinates
    varying vec2 vUv;

    void main () {
      // Set each vertex position according to the
      // orthographic camera position and projection
      gl_Position = projectionMatrix * modelViewMatrix * vec4(position, 1.0);

      // Pass the plane texture coordinates as interpolated varying
      // variable to the fragment shader
      vUv = uv;
    }
  `,
  fragmentShader: `
    // Pass the texture from Framebuffer 1
    uniform sampler2D inputTexture;

    // Consume the interpolated texture coordinates
    varying vec2 vUv;

    void main () {
      // Get pixel color from texture
      vec4 texColor = texture2D(inputTexture, vUv);

      // Our fade-out color
      vec4 fadeColor = vec4(0.0, 0.0, 0.0, 1.0);

      // This step achieves the actual fading out:
      // blend texColor towards fadeColor by a factor of 0.05.
      // You can change the value of the factor and see
      // the result change accordingly
      gl_FragColor = mix(texColor, fadeColor, 0.05);
    }
  `
})

// Create our fadePlane
const fadePlane = new THREE.Mesh(
  fullscreenQuadGeometry,
  fadeMaterial
)

// Create our resultPlane
// Please notice we don't use fancy shader materials for resultPlane
// We will use it simply to copy the contents of Framebuffer 2 to the device screen
// So we can just use the .map property of THREE.MeshBasicMaterial
const resultMaterial = new THREE.MeshBasicMaterial({ map: null })
const resultPlane = new THREE.Mesh(
  fullscreenQuadGeometry,
  resultMaterial
)
```

We will use fadePlane to perform step 1 from the list above: rendering the previous frame, represented by Framebuffer 1, and fading it out to black. Step 2 then renders our original threejs scene on top. Both steps render to Framebuffer 2 and update its corresponding THREE.Texture.

We will use the resulting texture of Framebuffer 2 as an input to resultPlane. This time, we will render to the device screen. We will essentially copy the contents of Framebuffer 2 to the Default Framebuffer (device screen), thus achieving step 3.

Up next, let’s actually create our Framebuffer 1 and Framebuffer 2. They are represented by THREE.WebGLRenderTarget:

```js
// Create two extra framebuffers manually
// It is important we use let instead of const variables,
// as we will need to swap them as discussed in Step 4!
let framebuffer1 = new THREE.WebGLRenderTarget(innerWidth, innerHeight)
let framebuffer2 = new THREE.WebGLRenderTarget(innerWidth, innerHeight)

// Before we start using these framebuffers by rendering to them,
// let's explicitly clear their pixel contents to #111111
// If we don't do this, our persistence effect will end up wrong,
// due to how accumulation between step 1 and 3 works.
// The first frame will never fade out when we mix Framebuffer 1 to
// Framebuffer 2 and will be always visible.
// This bug is better observed than explained, so please
// make sure to comment out these lines and see the change for yourself.
renderer.setClearColor(0x111111)
renderer.setRenderTarget(framebuffer1)
renderer.clearColor()
renderer.setRenderTarget(framebuffer2)
renderer.clearColor()
```

As you might have guessed already, we will achieve step 4 as described above by swapping framebuffer1 and framebuffer2 at the end of each animation frame.

At this point we have everything ready and initialised: our THREE.OrthographicCamera, our 2 quads that will fade out and copy the contents of our framebuffers to the device screen and, of course, the framebuffers themselves. Note that up until this point we have not changed our animation loop code and logic; we have only created these new things at the initialisation step of our program. Let’s now put them into practice in our rendering loop.

Here is what my function that is executed on each animation frame looks like right now, taken directly from the codepen example above:

```js
function drawFrame (timeElapsed) {
  for (let i = 0; i < meshes.length; i++) {
    const mesh = meshes[i]
    // some animation logic that moves each mesh around the screen
    // with different radius, offset and speed
    // ...
  }

  // Render our entire scene to the device screen, represented by
  // the default WebGL framebuffer
  renderer.render(scene, perspectiveCamera)
}
```

If you have written any threejs code before, this drawFrame function should look familiar. We apply some animation logic to our meshes and then render them to the device screen by calling renderer.render() on the whole scene with the appropriate camera.

Let’s incorporate our steps 1 to 4 from above and achieve our persistence:

```js
function drawFrame (timeElapsed) {
  // The for loop remains unchanged from the previous snippet
  for (let i = 0; i < meshes.length; i++) {
    // ...
  }

  // By default, threejs clears the pixel color buffer when
  // calling renderer.render()
  // We want to disable it explicitly, since both step 1 and step 2 render
  // to Framebuffer 2 accumulatively
  renderer.autoClearColor = false

  // Set Framebuffer 2 as active WebGL framebuffer to render to
  renderer.setRenderTarget(framebuffer2)

  // Step 1
  // Render the image buffer associated with Framebuffer 1 to Framebuffer 2
  // fading it out to pure black by a factor of 0.05 in the fadeMaterial
  // fragment shader
  fadePlane.material.uniforms.inputTexture.value = framebuffer1.texture
  renderer.render(fadePlane, orthoCamera)

  // Step 2
  // Render our entire scene to Framebuffer 2, on top of the faded out
  // texture of Framebuffer 1.
  renderer.render(scene, perspectiveCamera)

  // Set the Default Framebuffer (device screen), represented by null,
  // as active WebGL framebuffer to render to.
  renderer.setRenderTarget(null)

  // Step 3
  // Copy the pixel contents of Framebuffer 2 by passing them as a texture
  // to resultPlane and rendering it to the Default Framebuffer (device screen)
  resultPlane.material.map = framebuffer2.texture
  renderer.render(resultPlane, orthoCamera)

  // Step 4
  // Swap Framebuffer 1 and Framebuffer 2
  const swap = framebuffer1
  framebuffer1 = framebuffer2
  framebuffer2 = swap

  // End of the effect
  // When the next animation frame is executed, the meshes will be animated
  // and the whole process will repeat
}
```

And with these changes in place, here is our updated example using persistence:

See the Pen 2. Persistence by Georgi Nikoloff (@gbnikolov) on CodePen.
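If you would rather keep the four steps out of your animation function, they can be wrapped in a small helper. What follows is only a sketch: `renderWithPersistence` is a hypothetical name of my own, and the function assumes it is handed the same kinds of objects we created earlier (a renderer, both cameras, both planes and the pair of render targets). It relies only on `setRenderTarget`, `render` and `autoClearColor`, which we already used above.

```javascript
// Hypothetical helper: performs steps 1 to 4 for a single frame.
// `targets` is a pair [previous, current] of render targets.
// Returns the pair in swapped order, ready for the next frame.
function renderWithPersistence ({
  renderer,
  scene,
  camera,
  orthoCamera,
  fadePlane,
  resultPlane,
  targets
}) {
  const [previous, current] = targets

  // Step 1 and step 2 accumulate into the same target,
  // so the color buffer must not be cleared between them
  renderer.autoClearColor = false
  renderer.setRenderTarget(current)

  // Step 1: draw the faded previous frame
  fadePlane.material.uniforms.inputTexture.value = previous.texture
  renderer.render(fadePlane, orthoCamera)

  // Step 2: draw the scene on top
  renderer.render(scene, camera)

  // Step 3: copy the result to the device screen
  renderer.setRenderTarget(null)
  resultPlane.material.map = current.texture
  renderer.render(resultPlane, orthoCamera)

  // Step 4: swap, expressed as a new pair instead of reassigning variables
  return [current, previous]
}
```

Inside drawFrame you would then store the returned pair and pass it back in on the next frame, instead of swapping framebuffer1 and framebuffer2 by hand. Because every dependency is passed in as an argument, the step order can also be checked with simple stand-in objects, without a GPU.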

Applying texture transformations

Now that we have our effect properly working, we can get more creative and expand on top of it.

You might remember this snippet from the fragment shader code where we faded out the contents of Framebuffer 1 to Framebuffer 2:

```glsl
void main () {
  // Get pixel color from texture
  vec4 texColor = texture2D(inputTexture, vUv);

  // Our fade-out color
  vec4 fadeColor = vec4(0.0, 0.0, 0.0, 1.0);

  // blend texColor towards fadeColor by a factor of 0.05
  gl_FragColor = mix(texColor, fadeColor, 0.05);
}
```

When we sample from our inputTexture, we can scale our texture coordinates down slightly, by 0.75%, which in turn magnifies the previous frame a little every time it is redrawn:

```glsl
vec4 texColor = texture2D(inputTexture, vUv * 0.9925);
```

With this transformation applied to our texture coordinates, here is our updated example:

See the Pen 3. Peristence with upscaled texture coords by Georgi Nikoloff (@gbnikolov) on CodePen.
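To see why multiplying the coordinates by a number below 1 enlarges the image, it helps to run the same arithmetic on a few coordinates outside the shader. This is a small sketch in plain JavaScript; `scaleUv` mirrors the multiplication in the shader line above, while `scaleUvAboutCenter` is a hypothetical variation of my own that scales around the middle of the texture instead of the UV origin.

```javascript
// Mirrors `vUv * scale` from the fragment shader:
// coordinates are scaled about the UV origin (0, 0)
function scaleUv ([u, v], scale) {
  return [u * scale, v * scale]
}

// Hypothetical variation: scale about the centre of the texture (0.5, 0.5)
function scaleUvAboutCenter ([u, v], scale) {
  return [(u - 0.5) * scale + 0.5, (v - 0.5) * scale + 0.5]
}

// The origin is left untouched, while the opposite corner
// now samples slightly inside the texture
console.log(scaleUv([0, 0], 0.9925))
console.log(scaleUv([1, 1], 0.9925))

// With centre-based scaling, the middle of the texture stays put instead
console.log(scaleUvAboutCenter([0.5, 0.5], 0.9925))
console.log(scaleUvAboutCenter([1, 1], 0.9925))
```

Every fragment ends up reading a texel that lies a bit closer to the fixed point, so the previous frame appears to grow away from that point on each pass. Which fixed point looks better is a matter of taste.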

Or how about increasing our fade factor from 0.05 to 0.2?

```glsl
gl_FragColor = mix(texColor, fadeColor, 0.2);
```

This makes fadeColor four times stronger in each frame, thus shortening our persistence effect:

See the Pen 4. Reduced persistence by Georgi Nikoloff (@gbnikolov) on CodePen.
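To get a feel for how the fade factor translates into trail length, we can repeat the per-channel arithmetic of `mix()` against black in plain JavaScript. This is a rough sketch, assuming an idealised pixel with unlimited precision; a real 8-bit render target rounds every frame, so actual trails may behave a little differently. `framesUntilFaded` is a hypothetical helper name.

```javascript
// Count how many frames it takes for a fully bright pixel (1.0) to drop
// below `threshold` when it is mixed towards black by `fadeFactor` each frame
function framesUntilFaded (fadeFactor, threshold = 1 / 255) {
  if (!(fadeFactor > 0 && fadeFactor <= 1)) {
    throw new RangeError('fadeFactor must be greater than 0 and at most 1')
  }
  let brightness = 1
  let frames = 0
  while (brightness >= threshold) {
    // Same maths as mix(texColor, black, fadeFactor) for a single channel
    brightness = brightness * (1 - fadeFactor)
    frames++
  }
  return frames
}

console.log(framesUntilFaded(0.05))
console.log(framesUntilFaded(0.2))
```

Under these assumptions, a factor of 0.05 keeps a pixel visible for roughly a hundred frames, whereas 0.2 fades it out in about a quarter of that time.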

But why stop there? Here is a final demo that provides you with UI controls to tweak the scale, rotation and fade factor parameters in the demo. It uses THREE.Matrix3 and more specifically its setUvTransform method that allows us to express the translation, scale and rotation of our texture coordinates as a 3×3 matrix.

We can then pass this 3×3 matrix as another uniform to our vertex shader and apply it to the texture coordinates. Here is the updated vertexShader property of our fadeMaterial:

```glsl
// pass the texture coordinate matrix as another uniform variable
uniform mat3 uvMatrix;

varying vec2 vUv;

void main () {
  gl_Position = projectionMatrix * modelViewMatrix * vec4(position, 1.0);

  // Since our texture coordinates, represented by uv, are a vector with
  // 2 floats and our matrix holds 9 floats, we need to temporarily
  // add extra dimension to the texture coordinates to make the
  // multiplication possible.
  // In the end, we simply grab the .xy of the final result, thus
  // transforming it back to vec2
  vUv = (uvMatrix * vec3(uv, 1.0)).xy;
}
```

And here is the result. I also added controls for the different parameters:

See the Pen 5. Parameterised persistence by Georgi Nikoloff (@gbnikolov) on CodePen.
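If you are curious what such a matrix does to a single texture coordinate, the multiplication from the vertex shader can be reproduced in plain JavaScript. The sketch below is a hand-rolled stand-in, not threejs code: `makeUvMatrix` and `applyUvMatrix` are hypothetical helpers, and the matrix they build (scale and rotate about a centre point, then translate) is my assumption of a reasonable composition; the exact order used by setUvTransform may differ.

```javascript
// Hypothetical stand-in for a UV transform matrix: scales and rotates
// coordinates about the centre (cx, cy), then translates them by (tx, ty).
// The matrix is returned as three rows of plain numbers.
function makeUvMatrix ({ tx = 0, ty = 0, scale = 1, rotation = 0, cx = 0.5, cy = 0.5 } = {}) {
  const c = Math.cos(rotation)
  const s = Math.sin(rotation)
  return [
    [scale * c, scale * s, -scale * (c * cx + s * cy) + cx + tx],
    [-scale * s, scale * c, -scale * (-s * cx + c * cy) + cy + ty],
    [0, 0, 1]
  ]
}

// Same operation as (uvMatrix * vec3(uv, 1.0)).xy in the vertex shader
function applyUvMatrix (matrix, [u, v]) {
  return [
    matrix[0][0] * u + matrix[0][1] * v + matrix[0][2],
    matrix[1][0] * u + matrix[1][1] * v + matrix[1][2]
  ]
}

// Halving the coordinates about the centre: the centre stays where it is,
// while the corner moves halfway towards it
const zoomMatrix = makeUvMatrix({ scale: 0.5 })
console.log(applyUvMatrix(zoomMatrix, [0.5, 0.5]))
console.log(applyUvMatrix(zoomMatrix, [1, 1]))
```

Extending the 2D coordinate with a trailing 1 is what lets a single 3×3 matrix carry the translation alongside the scale and rotation.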

Conclusion

Framebuffers are a powerful tool in WebGL that allows us to greatly enhance our scenes via post-processing and achieve all kinds of cool effects. Some techniques require more than one framebuffer, as we saw, and it is up to us as developers to mix and match them however we need to achieve our desired visuals.

Further readings: